Recursive Language Models ("RLMs")

2026 has got itself a new acronym

For those of you that are new here, welcome! I’m a partner at General Catalyst focused on AI, infra, cyber, etc., at the earliest stages. You can also find me on X here: x.com/alex__mackenzie. Thank you to the ~1.6k of you following along 😄

If any of you fancy “liking” this post’s X post it genuinely goes a long way in getting this post out there 🙏🏻

My next primer will likely be on Antithesis if you feel like subscribing below to keep tabs. Other posts in the proverbial oven include some performance analysis of Ruff, Jane Street’s Magic Trace, and a primer on tinygrad. Suggestions are always welcome!

Ever since my Hyena Hierarchy primer I’ve been pretty into the research surrounding context optimisation, limits, scaffolding, etc. Largely because, whilst we’ve continued to make progress here, I still bump into context issues all the time. I often find myself feeling like I need to re-reference a specific file or function in Cursor after a certain period of time. A more “fun” example of this issue that I ran into recently was having a Udon recipe described as a Rust object (!). Rust’s memory safety is great, but I don’t need it in my noodles (there’s a sentence I never thought I’d write).

It’s also important to note that there’s a pretty material difference between a model’s “physical” maximum context window and its maximally effective context window. So we should always be a little skeptical with context window headlines.

So, over the break, I took note when I saw Alex Zhang’s & Omar Khattab’s work doing the rounds on X (full paper here). Perhaps even more so once I saw just how bullish the Prime Intellect folks were on its implications, describing RLMs as “The Paradigm of 2026”. Such is the way with AI these days, on the day I started writing this primer (Jan 7th), Cursor also released their take on “dynamic context discovery”, which, at a cursory glance, appears to rhyme with some of the thinking behind RLMs.

So yeah, let’s start the new year with some research + a new acronym for us to get acquainted with! & if you’d like to say hi, or have any thoughts, questions, etc., please do drop me a note on X: https://x.com/alex__mackenzie. Always happy to chat.

Context Rot

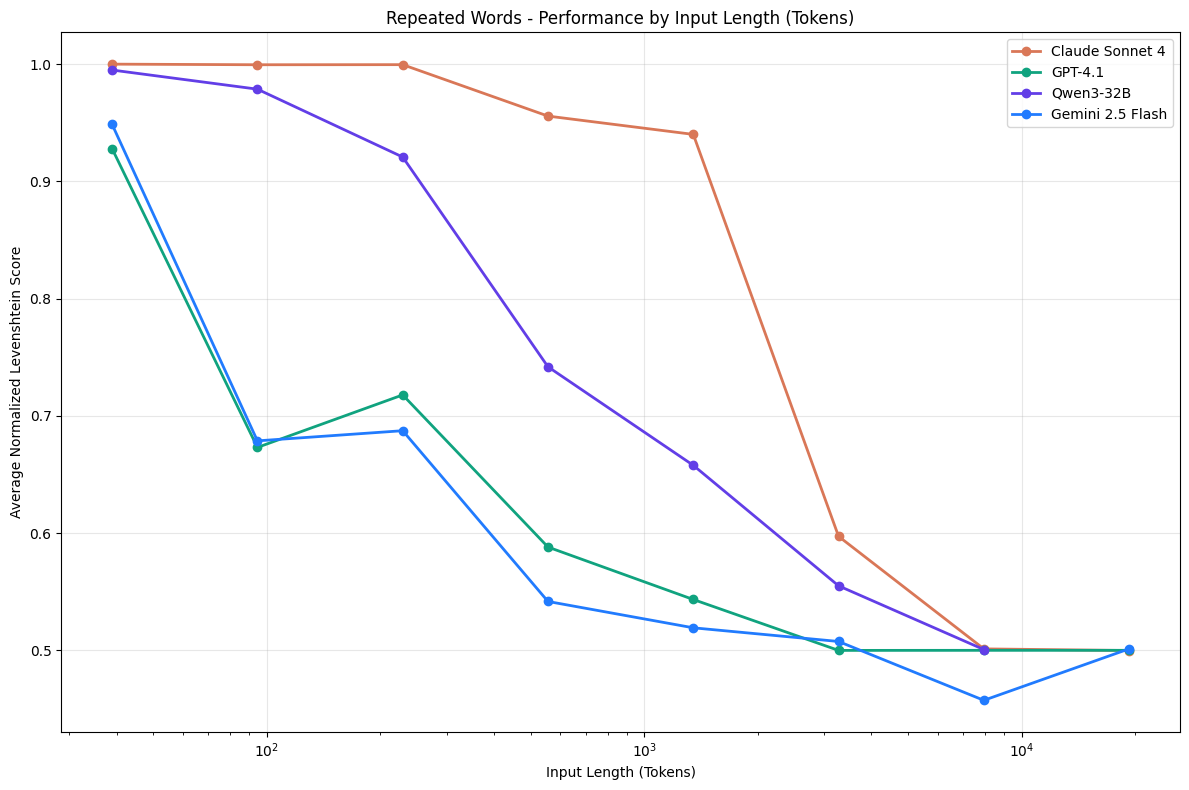

So, why are we still running into these context issues? Model performance degrades as input (“context”) length increases, a phenomenon known as “context rot”. As Alex Zhang points out, certain experiments refute this claim, but c’mon, we’ve all felt it.

To make this a little less satirical, and a tad more analytical, Chroma has done some nice work on charting performance degradation below. But, what actually causes context rot? I’ll share a few simple explanations with the usual caveat that there are a bunch of smart research directions beyond recursive language models tackling CR.

1. Attention Dilution

The softmax (wrote a post explaining softmax if you care to dig in) in attention normalizes scores across all tokens. As sequence length grows, attention weights get spread thinner. A token that’s genuinely important might get 0.3 attention weight in a 500-token context but 0.03 in a 50,000-token context. It’s worth making sure this point really clicks fyi, models aren’t “forgetting” as such, they’re distributing probability mass across more candidates.

2. Positional Encoding Degradation

Remember, “Attention” let models learn which words matter to which, regardless of distance (i.e. their respective positional encodings). The issue here is that most models use positional encodings that were trained on certain sequence lengths (i.e. we have significantly more training data that shows the relationship between tokens at positions 1–4096 than between tokens at positions 100,000–104,096). Hence, we get degradation. RoPE (rotary embeddings) and ALiBi help with extrapolation, but there’s still distributional shift.

3. Compounding Errors:

This is a pretty intuitive/obvious one. In autoregressive generation (i.e. models that predict the next token based on ~all prior tokens), small mistakes propagate. More context == more opportunities for the model to slightly mis-weight something, and for those errors accumulate. We can ofc partially attenuate this issue via chain-of-thought reasoning/scratchpadding, but, this adds additional context (see points 1 + 2 above 🥲).

++

Recursive Language Models

Ok, so, what are RLMs and how do they help deal with context rot? Oh, and, as a sidenote, using agents to generate UIs to explain technical topics is a total game changer. Would recommend opening up this interactive RLM demo (took 2 mins to spin-up?!) as we walk through the definition.

As Alex points out, the natural solution to context rot is something along the lines of, “well maybe if I split the context into two model calls, then combine them in a third model call, I’d avoid this degradation issue”. This intuition is the basis for a recursive language model.

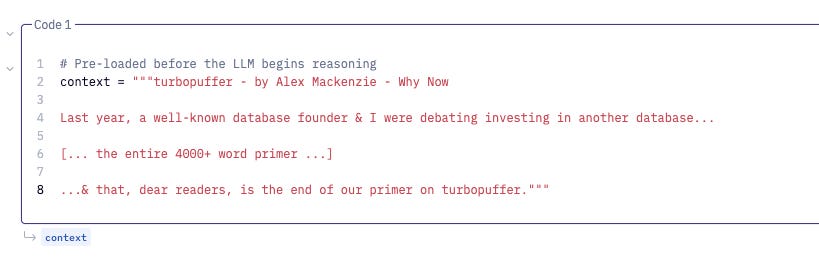

How RLMs roughly work in practice is that we convert our long context (e.g. my primer on turbopuffer) into a large python string like so (thank you Hex for the nice notebook).

This python string is now a variable called context that can be processed. Note, so far, our “Root LM” hasn’t actually seen this context, and as a result, can mark itself as safe from context rot. Alex refers to this Root LM as having a “depth” equal to 0 (depth=0). As “recursive” suggests, we’ll spin up other LMs that have depths >0, but don’t worry about this for now. Note that Cursor is doing something similar with their dynamic context discovery whereby they’re turning long tool calls into files.

Important: all our Root LM has “seen” thus far is the query (e.g. “what indexing strategies does turbopuffer use?”) that we want answered via the context, and the knowledge that context variable exists and that it can be manipulated (the LLM’s system prompt).

Next, the Root LM (again, depth=0) “peeks” at the context. What this means is it writes some basic python that, for example, helps it 1) determine the length of the context, 2) prints the first 1,500 characters of the context.

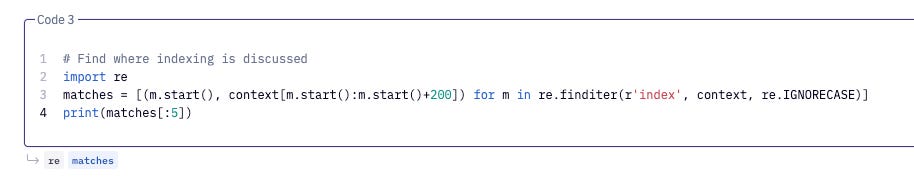

Based on the above output, our Root LM may decide to “grep” (this is a technical way of saying that we’ll do a string search) for some additional text related to the query it originally received (remember, this query was: “what indexing strategies does turbopuffer use?”). So our string search could be the below. PS - don’t get intimidated by the regex below, it never looks pretty.

All this is doing is returning back to our Root LM every occurrence of the word “index” in the context, as well as the word’s position plus a 200-character preview around each match. Again, we’ve progressively added to our Root LM’s context. The returned object looks roughly like:

[

(15234, “indexing. Ok, so, just like at the library, we use indexes in databases...”),

(16102, “index (remember, the ‘asynchronous’ guy) and understand ‘ANN’ and ‘inverted BM25’...”),

(17845, “inverted index would look like:\n\n’rubberized’: [1, 3]...”),

]With this output, our Root LM can now infer that the indexing section of our turbopuffer primer exists between characters ~15,000-~18,000. It’s context is updated accordingly. Next is where things get a bit more interesting, as we’re about to explore depth=1 (i.e. spinning up separate or “sub” LMs). See below.

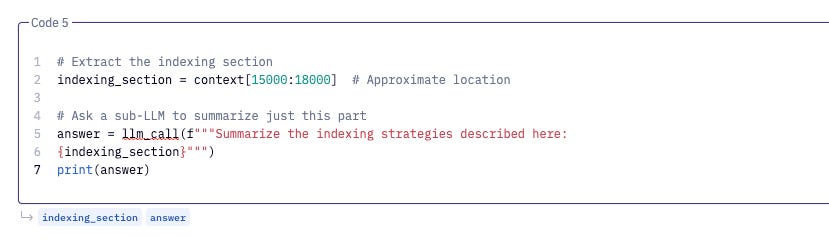

Ok, cool, to sum-up: our Root LM has written code to create a new python variable called indexing_section that contains the primer text between characters 15,000-18,000.

With this in mind, our Root LM calls a sub-LM (depth=1) and asks it to summarise the indexing strategies described in indexing_section. Through doing so, we’re minimising the amount of context our Root LM receives (e.g. perhaps our sub-LM also leverages browser-use to complement what it’s read in the primer). The sub-LM then passes its final output to the Root LM:

FINAL(”turbopuffer uses two main indexing strategies: 1) ANN (Approximate Nearest Neighbor) with SPANN optimization that stores centroids in NVMe cache and posting lists on object storage, and 2) inverted BM25 indexes for text search that map terms to document IDs with relevance scoring.”)So, what the above (hopefully) illustrates that a RLM is a general inference strategy where language models decompose and recursively interact with their input context as a variable. It’s this input context, and the LM’s multiple interactions with it, that determines what step the LM will take next vs. some pre-ordained plan (i.e. “agentic planning”). It should also be evident that the example query-chain described above requires significantly less context to generate the desired output.

So What? What Does This All Mean?

Well, on the performance side, the RLM team demonstrated that “an RLM using GPT-5-mini outperforms GPT-5 on a split of the most difficult long-context benchmark[s] [they] got [their] hands on (OOLONG) by more than double the number of correct answers, and is cheaper per query on average!”. Nice, seems like we have a pretty material solve for context rot.

When spelt out like this, RLMs sound kinda trivial though, right? Sure, but it’s first worth noting that the depth=0 “peeking”, “grepping” and depth=1 “summarisation” steps are strategies the RLM has developed based on its constraints. The RLM wasn’t instructed to first peek, then grep, etc., nor did it make an initial plan to conduct these steps sequentially.

For more semantic queries/contexts where the likes of regex fails (regex doesn’t know what indexing is, it just knows how to search for the literal word “index”), RLMs have also identified smart chunking strategies whereby they split the context into multiple “chunks”, & then spin-up independent sub-LLMs to process each chunk. Pretty cool. No doubt we’ll see plenty of other interesting behaviours emerge.

Now to zoom out, and, as Palmer would say, think about the logical end state. Whilst we’ve yet to see if RLMs get us here (although multi-threading seems quite doable?), what does it mean to have an infinitely effective context window? Truly full codebase-reasoning (e.g. “find all the ways this API contract could be violated across these 500 files”) would be an exciting step. Perhaps we’re another leap closer to long-running personal assistants, or better yet, Udon recipes without the Rust.

RLMs also hint that we’ve found a way for AI to increasingly “self-manage” its context which could be rather profound. Will spend more time thinking about what this all means. Excited for 2026.

Disclaimer